Why can’t we just use vector-based icons in our application? This question, in a variety of reinterpretations, keeps on resurfacing in various forums, blogs and articles. It is asked in the context of desktop applications where the same icon is used in different ways, starting from very small 16*16 icons in file lists, up to 128*128 icons used on the dock and all the way to 512*512 icons that mostly serve to highlight the artistic capabilities of icon designers, at least until the computing world is taken over by 1200dpi desktop displays. It is also asked in the context of native mobile applications for the Android platform, where a single vector icon (in SVG format) is proposed as the replacement for multiple versions of the same icon, each optimized for the specific density bucket (usually medium and high).

At first glance, both designers and developers only stand to gain from switching to creating their icons in vector format. A designer is going to create a single version of the icon in his tool of choice, hand it off to the developer to add to the project structure, and have the runtime scale the combined shapes of the icon to whatever context it is used in – be it the small icons for the action bar, or an extra large icon for the “about” page. In fact, a lot of comments on the articles and blog posts referenced later in this entry indicate that this approach works quite well. At least, technically. And so, to summarize the feeling of discontent, Y U NO SVG?

A few days ago I invited one of our visual designers to lunch and asked him about the general process of creating icons for multiple screen resolutions (for the core Android experience). The answer was, at least to me, quite surprising. The first stage happens in a sketchbook or on a whiteboard, where different ideas, shapes and combinations are explored to find the iconography that works well within the overall direction of the platform, while still providing a distinctive shape and form for the specific action or object represented by the icon. Then the flow transitions to the computer, with Adobe Illustrator and Fireworks being the most popular choices. There, the designers create the “master” version of the icon – in a vector format. This version is scaled down to all target resolutions (medium, high, sometimes low and, most recently, extra high), and this is where the fun begins. This is where the designer looks at the scaled down version of the icon, for each resolution, and begins a sometimes painstaking process of pixel-perfecting the visuals.

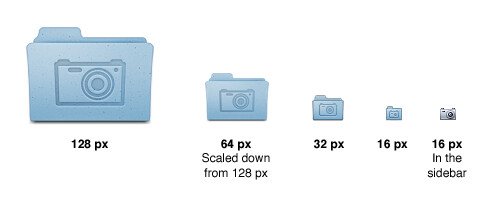

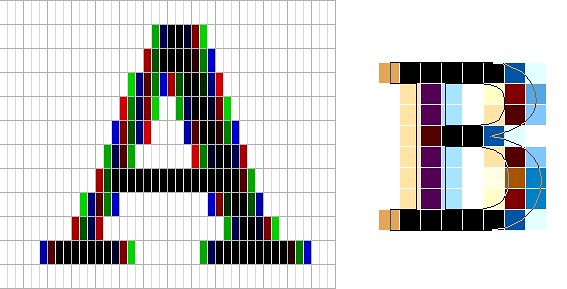

There’s a lot of art and a lot of craft involved in creating and maintaining a consistent visual iconography language within the specific application, and across the entire platform. Lines should be sharp, rounded corners should have consistent curvature, lighting and gradients should have consistent direction and amplitude. In addition, icons at smaller resolutions should not have too much visual detail, while icons at higher resolutions should not feel too sparse. This is illustrated in the “Icon Design: Bitmap vs Vector” article by Firewheel design:

The top row shows the hand-optimized versions of the same application icon at four different resolutions. The bottom row shows icons mathematically scaled from a single source. If you compare the relative sizes and detail complexity of various parts of the icons in the top row, you will see that they don’t scale at the same rate. Some parts grow linearly with the icon size, while some grow at a much slower rate. This is further illustrated in “All the sizes of iOS app icons” by Neven Mrgan:

It’s simply not possible to create excellent, detailed icons which can be arbitrarily scaled to very small dimensions while preserving clarity. Small icons are caricatures: they exaggerate some features, drop others, and align shapes to a sharp grid. Even if all icons could be executed as vectors, the largest size would never scale down well.

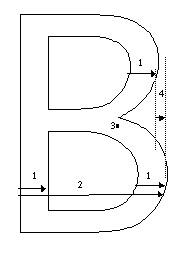

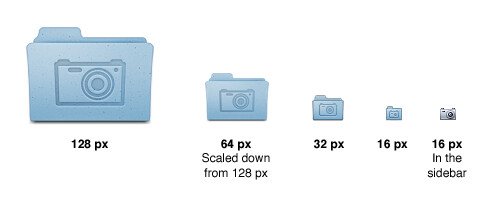

Here’s the icon for the Pictures folder in Mac OS X:

Note that scaling down works to about 64 px; after that, shapes have to be redrawn, simpler and clearer, in order to read. The sidebar version of the icon is entirely different, in fact; since we know it will be shown in the sidebar, it’s not so important that it look like a folder, and other features can be emphasized instead. Creating the large icon as a vector shape –which, to be clear, you should be doing! – won’t help where clarity is really needed: at small sizes. High-resolution displays will in fact make this problem more urgent because today’s 64 px is tomorrow’s 128 px. We’ll have to refine ever larger icons.

Dave Shea takes a closer look at the mechanics of optimizing the original shapes and lines for smaller sizes in “Icon Design: Sizing”:

The solution is to start with the reduced version, and tweak it at the individual pixel level. Make the details fit within the pixel grid, remove extra detail that’s causing blur, or even add extra detail if it helps you get to your end goal. Whatever it takes, the solution is to provide a critical eye to the problem and tweak until you get a result you’re happy with, which is why the size variations are so much extra work.

In the calendar above, you’ll notice that I’ve tweaked the two different sizes so the inner boxes end up with whole pixel values on either side. To do this I’ve had to reduce the size of the boxes at 24×24, and actually create more boxes at 16×16. I couldn’t come up with a combination of 4 columns with a 1 pixel wide border that would fit within the space allotted at that smaller size; the only workable combination I found involved adding an extra column and dropping a row. The icon is a bit different than the 32×32 equivalent, but it’s clearly derived from the larger one and works as an acceptable size variation.

Additional examples of small icons moving shapes around and even “losing” some of them can be seen in a variety of modern applications and UI toolkits. Here is an example from the widely-lauded iA Writer for Mac application:

While the central element – a slanted sky blue caret – preserves the overall shape, angle and gradient, the text next to it begins “losing” characters the closer you get to 32*32 size. The 16*16 icon is just the caret, with no characters next to it.

The same approach to simplifying the shapes, textures, perspective and density can be seen in the system icons introduced in GNOME 3.0:

If you trace the progression of the transition to smaller icon sizes across these three icons (and additional icons on the original entry), you will see a consistent approach that starts stripping away dimensionality, complexity, textures, gradients and density, preserving not only the overall shape and feel of the icon, but also the consistency of iconography language across all icons of the same size.

If you do not wish to spend extra time to pixel-perfect your icons at smaller sizes, using a single-source vector format as the “master” and scaling down to any arbitrary size is a perfect fit for SVG. In this context, the following quote from the self-titled “Graphics Ninja” Zack Rusin talking about SVG in KDE is quite illuminating:

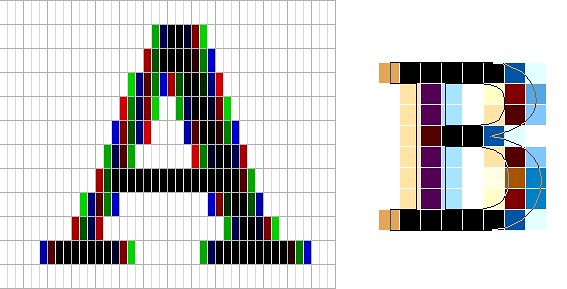

The loss of quality in vector graphics at small size is a severe problem. Rendering vector graphics primitives at low resolutions introduces a certain amount of blur into the output. This is mainly caused by horizontal and vertical primitives which happen to fall between pixel boundaries, which in turn makes the anti-aliasing algorithms try to cope with it by rasterizing two, instead of one rows/columns but at a lower color intensity. For primitives which are rendered at small sizes the goals of “resolution independence” and “preserving their good looks across resolutions” diverges a lot. We have the former, we need the latter.

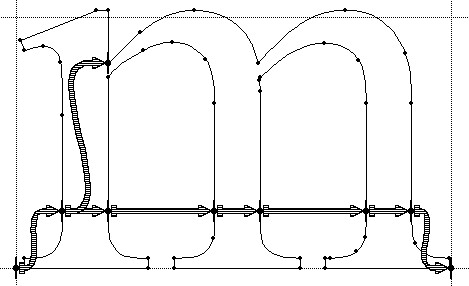

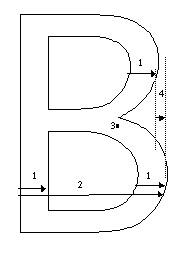

One of the ways of dealing with this problem is hinting. The problem of hinting vector graphics primitives has been extensively researched by the way of font technologies. Grid-fitting (aka. “font hinting”) is a crucial step on the way to produce legible output at small sizes for a lot of fonts. Hinting can be manual (e.g TrueType has a stack-based language for it, each glyph in the font contains its own little hint program and as a result of running that program control points for the outlines can be adjusted in any way the creator of the hints desired) or automatic (as used by FreeType). An interesting medium is described in “Example-Based Hinting of TrueType Fonts” paper, in which a method of reusing hints from one font for another are described. All in all it’s a very common problem for fonts.

The research the engineers from the FreeType project conducted on auto-hinting is outstanding. Right now the way KDE artists go around this problem is by producing certain SVG icons with different viewport sizes. This allows them to manually adjust the rendering for certain native resolutions.

The reality of the situation is that without very high DPI displays the quality of small SVG renderings is going to suffer. A solution would involve introduction of either an auto-hinting algorithm or adding a declarative approach of specifying the hints which the artists could easily utilize. It’s a problem which affects all SVG users and should be handled in the standard itself.
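The blur described in the quote above is easy to reproduce with a few lines of arithmetic. What follows is a minimal sketch, not how any real SVG renderer works: it assumes a one-pixel-wide vertical bar and simple box-filter anti-aliasing, where the intensity of each pixel column is the fraction of the column covered by the bar. The function names `column_coverage` and `snap_to_grid` are made up for this illustration.

```python
import math


def column_coverage(left, width=1.0):
    """Fraction of each pixel column covered by a vertical bar [left, left + width)."""
    right = left + width
    coverage = {}
    for col in range(math.floor(left), math.ceil(right)):
        overlap = min(right, col + 1) - max(left, col)
        if overlap > 0:
            coverage[col] = overlap
    return coverage


def snap_to_grid(left):
    """Naive grid fitting: move the left edge to the nearest pixel boundary."""
    return float(math.floor(left + 0.5))


aligned = column_coverage(3.0)               # bar sits exactly on the pixel grid
blurred = column_coverage(3.5)               # bar straddles two pixel columns
fitted = column_coverage(snap_to_grid(3.4))  # bar nudged back onto the grid

print(aligned, blurred, fitted)
```

The aligned bar lights up a single column at full intensity, while the misaligned one is spread over two columns at half intensity each. Snapping the edge to the grid restores the crisp result at the cost of moving the shape, which is roughly the trade-off that hinting automates and that icon designers make by hand.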

There are a lot of similarities between pixel-perfecting vector graphics and auto-hinting of font glyphs. Both aim to address a very similar problem. Both operate in a flow where the master version is created at extremely high resolutions to look good in booklets, portfolios and promotional material, but versions scaled down for “real world” use suffer from poor grid fitting, detail clutter, detail loss and blurriness. In fact, some designers go as far as proposing to forgo the standalone icons altogether and use the advanced capabilities of type engines instead. Proposed by Wayne Helman last year, this idea was further expanded upon by P.J. Onori in his “Font-Embedding Icons: This Is a Big Deal” article that goes on to say:

The article was well-received, but I was honestly expecting more excitement around this idea. From my view, this now seems like the way to set icons in a site. I feel strongly about the potential of this method, so I thought I would take the time to generate a font set for Iconic and to talk about why we should all be using this method for displaying icons.

Listing “one icon, infinite sizes” as one of the advantages, it seems to be a great solution, but only for duotone, or more precisely purely black and white, icons. In addition, it completely fails to address the giant elephant in the room – what to do for complex icons that do not scale well to small sizes? Type engines have two major approaches to solve this problem – embedding bitmaps and font hinting.

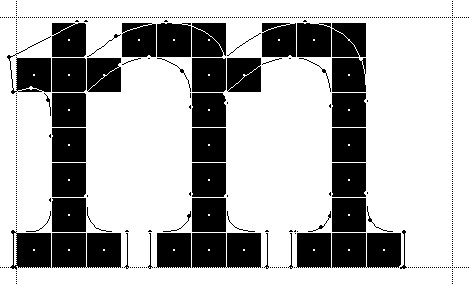

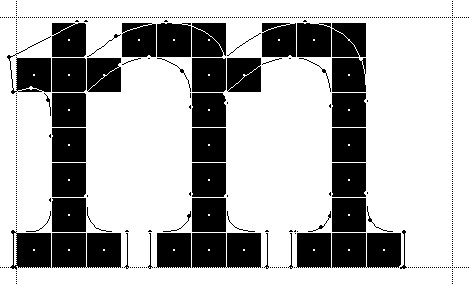

Embedding bitmaps is a rather straightforward approach. You start from a high-resolution master definition of the glyph, and identify those glyphs that do not scale down well past a certain point (lowercase ‘m’, ‘s’, ‘a’ and ‘g’ are usually among the prime suspects). For those glyphs, you hand-tweak the visuals for all target point sizes, export them as bitmaps and then embed the bitmaps as binary blobs in the font file. In fact, it can work the other way around, as detailed by Simon Earnshaw, a typographer at Microsoft:

In the past I’ve been burned starting from outlines and trying to be extra clever in the hinting. So I finally decided, ‘I’m better off grasping the nettle. What’s most important is to get the bitmaps right at the sizes people use most often.’ So instead of starting with outlines and then working to hint them for the screen, I started by simply making bitmap fonts. No outlines, just bitmaps.

Bitmaps are relatively easy to make and they show exactly how the fonts will look on-screen. This allowed us to make decisions about sizes, weights, and distinctions between serif, sans, roman, italic, all viewed in context. Working this way we came up with a definition for a certain number of critical sizes and weights.

Once the key bitmaps were done, I very carefully wrapped an outline around them. I always have in mind that this outline will then be given to the person responsible for hinting–and they’ll need to be able to hint outline to get back, pixel for pixel, to the bitmap faces where we started.

Embedding bitmaps worked well on CRT monitors, but did not scale into the world of LCD monitors and subpixel rendering. This is where hinting comes into play, as summarized in this great overview by Peter Bil’ak on Typotheque:

This is exactly what hinting is about: programming instructions that fine-tune a font’s rasterisation, the process by which its mathematically ideal outlines are mapped onto a monitor’s pixels. Hinting can control the heights and widths of a font’s uppercase and lowercase letters, the widths of its individual lines, the amount of white space around letters, the size at which uppercase letters start to use different stem-widths from lowercase letters, how the angle of italic characters changes to best fit the pixel grid, and many other extremely technical details, all on a pixel-by-pixel basis. If this sounds like a rather tedious, time-consuming activity, it is, (even for type designers, who are accustomed to tedious, time-consuming activities).

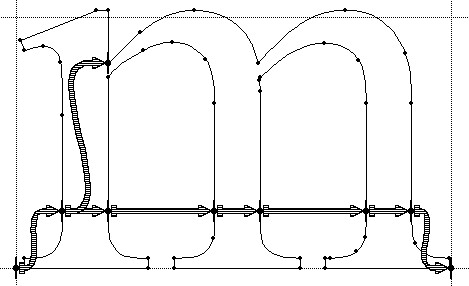

The complexities of type hinting are illustrated in “The raster tragedy at low resolution” article by Beat Stamm that gives just a small taste of what it takes to hint a single glyph – not to mention the implementation complexity of the type engine itself.

Beat Stamm even followed up with RasterTragedy.com, delving much deeper into anti-aliasing, hinting, layout and rendering across a wide spectrum of modern type engines.

To further appreciate the complexities of creating a type-hinting program for a specific glyph, you can start with this “Hello world” tutorial that hints the uppercase ‘L’, follow up with more complex examples for glyphs with curves, serifs and slanted stems, and finally revel in the full TrueType instruction set, the complexity of which rivals, if not exceeds, that of SVG itself.

Throughout the article I stayed away from the complexity of the SVG format itself, and its full implementations. There’s a simple reason – if the format is powerful enough to address the needs and requirements of designers who pay special attention to pixel-level details, it will provide a natural push to have the full implementation of that format included in the UI toolkits and platforms. In its present state, however, SVG is not there. Furthermore, extending SVG with capabilities similar to those of TrueType hinting instructions will make the full implementation much more complex. A much more important question, though, is whether it is going to make it easier for icon designers to create a single vector-based version of their icons.

If you’ve followed my reasoning up until now, the simple answer is no, it will not. When each icon line, each icon stroke, each icon shape needs to be hinted for precise rendering at small sizes, when you need to go well beyond each individual layer to make sure that they are hinted as one collective assembly, when you need to learn an extra set of tools that will undoubtedly go beyond the current instruction set of type engines as it’ll need to support lighting, gradients, collapsing and hiding detail – this is just not a tenable solution.

As to the process of pixel-perfecting icons? Once you have scaled the master version down to all the target sizes, you can do different things. You can start moving pixels directly, at the expense of redoing the exact same thing when you go back and change the master. Or you can go back to the master and create “secondary” masters, one for each target size. Each secondary master is not meant to be used at the highest resolution, but is instead optimized to create the best pixel-level version when it is scaled down to the target size. The downside is that once the original master is changed, you have many more tweaks to do.

A final thought about the high-resolution displays and the quote above from Neven Mrgan. Making a leap of faith, let’s say that in 50 years we’ll have screens with resolution of 1200dpi (which is “only” four times the resolution of iPhone 4 and Galaxy Nexus, but sixteen times as many pixels in a square inch). In such a world, a single grain of sand will cover four 16*16 pixel icons. In fact, all the mentions of small-size icons in this article refer to the physical scale of small – not the pixel scale. To maintain a usable touch interface, an interface that can be easily scanned with a human eye, you will want to maintain the current physical scale of the icons – making them much larger on the pixel scale. The smallest icon on such a device with the current UI building blocks will be around 128*128 pixels. However, it does not automatically mean that you can all of a sudden cram all the fine details from your (even higher resolution) master icon into the available pixel space. As each pixel gets smaller, it does not mean that you want to progressively increase the detail complexity and density.
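The arithmetic behind these numbers can be sketched in a few lines. This is a back-of-the-envelope illustration under stated assumptions: today's phone screens are rounded to 300dpi (the iPhone 4 is a bit above that), and physical size is kept constant using Android's density-independent pixel convention, where one dp equals one pixel on a 160dpi screen. The helper name `dp_to_px` is made up for this example.

```python
def dp_to_px(dp, dpi, baseline_dpi=160.0):
    """Pixels needed to keep the physical size of `dp` units on a screen of `dpi`."""
    return round(dp * dpi / baseline_dpi)


CURRENT_DPI = 300.0   # assumption: rounded density of today's high-end phones
FUTURE_DPI = 1200.0   # the hypothetical display from the paragraph above

linear_ratio = FUTURE_DPI / CURRENT_DPI   # resolution along one axis
area_ratio = linear_ratio ** 2            # pixels per square inch

# A 16x16 icon on a 160dpi screen, kept at the same physical size.
future_icon_px = dp_to_px(16, FUTURE_DPI)

print(linear_ratio, area_ratio, future_icon_px)
```

Under these assumptions the smallest icon lands at 120 pixels per side, in the same ballpark as the 128*128 figure above.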

As Neven points out, clarity is king, and in such a future icon designers will have to hand-tweak even more icon sizes. And unless the future is a concept video where everybody is walking around with high-end devices that have seemingly unlimited battery life and connectivity, the feature gap between high-end and low-end devices will be even larger. And in such a future, icon designers will have to maintain multiple versions of the same pixel-size icons, each version pixel-perfected for use on a device with a specific density. But then again, in 50 years there may well be a completely different way to present information and a completely different technology to interact with.

So no, SVG is definitely not the answer. At least not today.

Over the course of this week I’ve talked about movements of physical objects in the real world, and how they can be applied to animating pixels on the screen. The last two entries have just skimmed the surface of animating UI objects, and even such straightforward areas as color animations and scroll animations can be much deeper and more complicated than they originally seem.

Every movement in the real world is governed by the laws of physics. Sometimes these laws are simple, and sometimes they are not. Understanding and emulating these laws in the virtual world of pixels takes time. It takes time to analyze how the objects in the physical world move. It takes time to find the right physical model for the specific dynamic change on the screen. It takes time to implement this physical model in the code. It takes time to optimize the implementation performance so that it is fluid and does not drain too much device power. Is it worth it?

Is it worth spending your time as a designer? Is it worth spending your time as a programmer? Is it worth spending your time as a tester? If you care about your users, the answer is a resounding yes.

People don’t read documentation. Nobody has time to do it, and it gets worse with every passing year. We are confronted with too much information, and the average attention span keeps on shrinking. A recent trend towards separation between data providers and application providers makes it incredibly simple for people to switch between different views on the data of their interest (think Twitter clients, for instance). People will start using your application, play with it for a few moments (minutes, if you’re lucky) and move on to the next shiny thing on a whim. How can you capture such a volatile audience?

Intuitive design is a popular term in the user experience community. Alan Cooper writes the following about intuition in his About Face 3:

Intuition works by inference, where we see connections between disparate objects and learn from these similarities, while not being distracted by their differences. We grasp the meaning of the metaphoric controls in an interface because we mentally connect them with other things that we have already learned. This is an efficient way to take advantage of the awesome power of the human mind to make inferences. However, this method also depends on the idiosyncratic human minds of users, which may not have the requisite language, knowledge, or inferential power necessary to make those connections.

What does it mean for a given interface to be intuitive? You click on a button and it does what you expected it to do. You want to do something, and you find how to do it in the very first place you look. The only surprises that you see are the good ones. The application makes you feel good about yourself.

This is definitely not easy. And you must use every available tool that you can find. How about exploiting the users themselves to make your job easier? How about building on the existing knowledge of your users and their experiences with related tools in either the real or the virtual domain? As quoted above, not all knowledge and not all experiences are universal, but the animations are.

We all live on the same planet, and we are all governed by the same physical laws. Applying these laws to the changes in your application (in color, shape, position etc) will build on the prior knowledge of how things work in the real world. Things don’t move linearly in the real world, and doing so on the screen will trigger a subconscious response that something is wrong. Things don’t immediately change color in the real world, and that’s another trigger. Moving objects cannot abruptly change direction in the real world, and that’s one more trigger. A few of these, and your user has moved on.

Drawing on the existing user experience is an incredibly powerful tool – if used properly. Some things are universal, and some things change across cultures. Distilling the universal triggers and transplanting them to your application is not an easy task. It requires a great deal of time and expertise from both the designers and programmers. And if you do it right, you will create a friendly and empowering experience for your users.

In a roundabout way, this brings me to the visual clues that are pervasive throughout the Avatar movie. If you haven’t seen it yet, you may want to stop reading – but I’m not going to reveal too much. Our first exposure to Neytiri – a native Na’vi – is around half an hour into the movie. Apart from saving Jake’s life, she is quite hostile, and she does not hide it from him. What I find interesting is how James Cameron has decided to highlight her hostility. It is not only through her words and acts, but also through the body language, the hand movements and the facial expressions. They are purposefully inhuman – in the “not regularly seen done by humans” sense of the word. The way she breaks the sentences and moves her upper body with the words, the movement of facial muscles when she tells him that he doesn’t belong there, and the hand gestures used throughout their first encounter certainly do not make the audience relate to her. On the contrary, the first impression reinforced by her physical attitude is that of hostility, savageness and animosity.

The story, however, requires you to associate with the plight of the locals, and root against the invasion of the humans who do not understand the spiritual connectivity of the Na’vi world. The love interest between Jake and Neytiri is a potent catalyst, and it is fascinating to see how Cameron exploits the human emotions and makes you – in the words of Colonel Quaritch – betray your own race. If you have seen the movie, imagine what it would feel like if the Na’vi looked like real aliens – from the Ridley Scott / David Fincher / the very same James Cameron saga. It would certainly cost much less money to produce, but would you feel the same seeing two ugly aliens falling in love and riding equally ugly dragons?

The Na’vi look remarkably humanoid – just a little taller. The only outer differences are the tail and the number of fingers. Other than that – a funky (but not too funky) skin color, the same proportion of head / limbs / torso, the same facial features, no oozing slime and the same places where the hair doesn’t grow. It has certain technical advantages – mapping the movements of real actors, including the facial expressions, onto the Na’vi bodies. In Avatar, however, there is a much deeper story behind the facial expressions. Cameron builds on your prior experience with the outward expression of human emotion in order to build your empathy towards the Na’vi cause, and make you root for them in the final battle scene. How likely is it that the Na’vi have developed not only the same body structure, but also the same way of reflecting positive emotions with facial expressions?

Our ability to relate to other human beings is largely based on our own experiences of pain, sorrow, joy, love and other emotions. Neytiri displays remarkably human-like emotions – especially throughout the Ikran taming / flying scene halfway into the movie. Cameron uses our own human emotions to guide us into believing in the Na’vi cause, and this is achieved by building on the universally human vocabulary of facial expressions. To believe the story, we must believe in the characters, and what better way to do so than making us associate with both sides of the relationship between Jake and Neytiri?

Make your users productive. Make them happy that they have spent time in your application. Make them want to come back and use your other products. Make an emotional connection. Build on what they know. Make them believe that every choice they make is their own. Or better yet, guide them towards where you want them to go while making them believe that they are in charge.

After seeing how the rules of the physical world can be applied to animating colors, it’s time to talk about layout animation. If you’re a programmer, it’d be safe to say that you spend most of your waking hours in your favorite IDE – and if you’re not, you should :) Writing and modifying code in the editor part of the IDE takes, quite likely, most of the time you spend in the IDE. A quite unfortunate characteristic of such an environment is that the UI around you is highly static. Of course, you see a bunch of text messages being printed to different views, or the search view being populated with the search results, or the problems view showing new compile errors, or maybe you invoke a few configuration dialogs and check a couple of settings. All of these, however, do not change the layout of the views that you are seeing.

The rest of the world spends their time outside the IDE, browsing their Twitter / Facebook / … streams, updating the Flickr / Picasa albums, downloading songs on iTunes, tuning into Pandora, making selections in the Netflix queues and what not. These activities are highly dynamic, and feature “information tiles” – data areas that display information on the status, pictures, songs, or movies that are relevant to the current view. While the number of available items is certainly finite, it does not – and should not – fit on a single screen. Thus, activities such as searching, filtering and scrolling are quite common. Let’s take a look at one such activity.

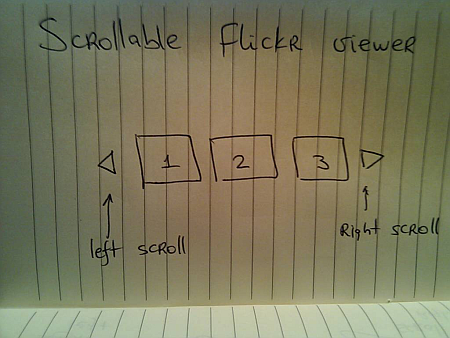

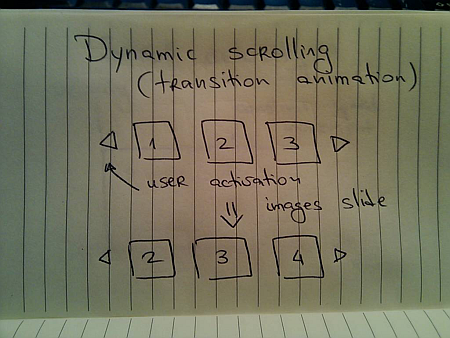

Suppose you have a scrollable widget that shows three pictures from your favorite Flickr stream in your blog footer:

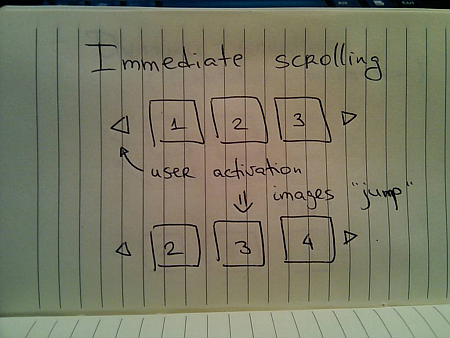

Here, the user can activate the left / right scroll buttons to view the next / previous batch of pictures – scrolling either one by one, or page by page. One option is to scroll immediately – as soon as the user activates the scroll button, the images just “jump” to the new locations:

Simple to implement, but very user-unfriendly. You’re making the user remember the previous state of the application – where each image was displayed – and make the mental connection between the old state, the button press and the new state. It would be fair to say that immediate scroll is persona non grata in the RIA world:

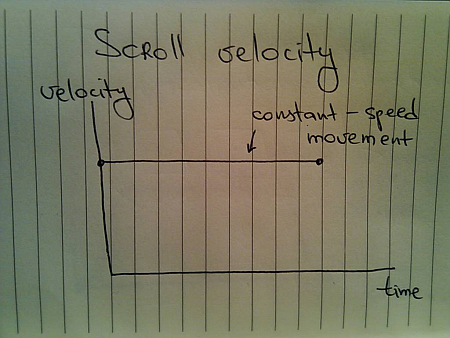

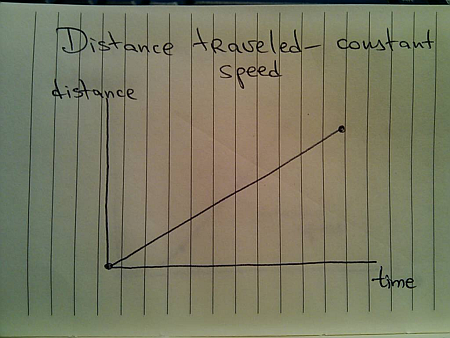

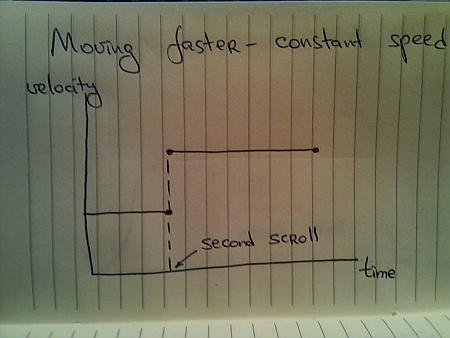

Now that you know that you want to slide the images, the next question is how to do it. Going back to the previous entries, a typical programmer’s solution is constant-speed scroll:

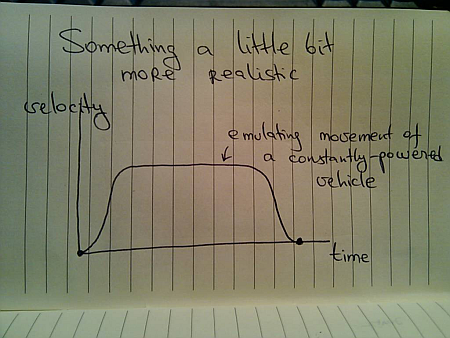

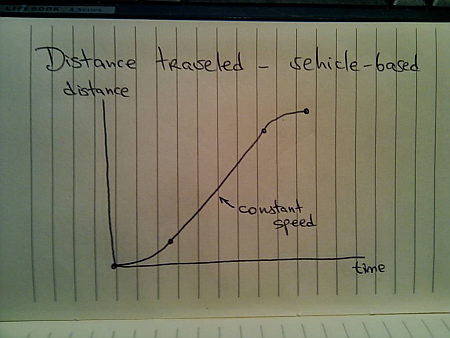

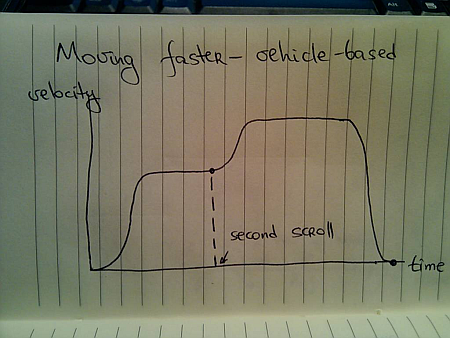

If you’ve followed this series so far, you know that this will not feel natural since it does not properly emulate movement of physical objects. How about emulating the movement of a man-driven vehicle:

Here, we have a short acceleration phase, followed by the “cruising” scrolling, with the eventual deceleration as the scrolling stops. Is this the correct model? Not so much – the cruising of the physical vehicle happens because you’re constantly applying the matching pressure on the gas pedal (or let the cruise control do it for you).

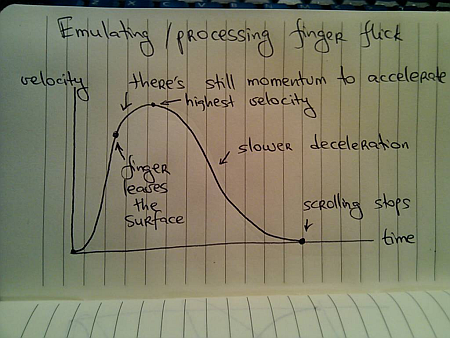

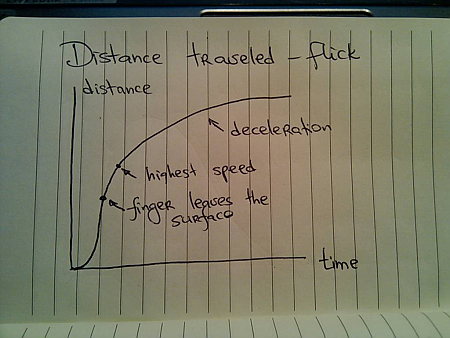

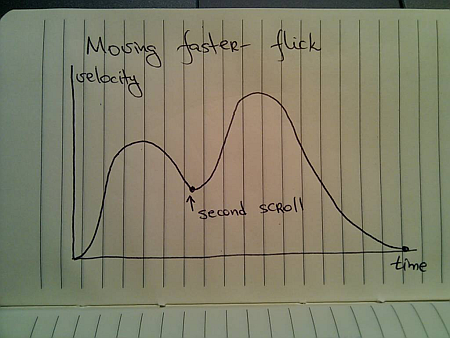

In this case, the user had a rather short interaction with the UI – clicking the button. The scrolling continues well after the button has been released, and as such there is nothing that can “power” this constant-speed movement. A better physical model is that of the finger flick – a common UI idiom on touch-based devices such as smartphones or tablets:

Here, you have a very quick acceleration phase as the user starts moving the finger on the UI surface, followed by a slower acceleration attributed to the momentum left by the finger. Once the scrolling reaches its maximum speed, it decelerates – at a slower rate – until the scrolling stops.
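To make the three models a bit more concrete, here is a rough sketch of their speed profiles as functions of normalized time `t` (0 at the start of the scroll, 1 at the end), with speed normalized so that the maximum is 1. The break points (`ramp`, `push`, `peak`, `push_speed`) are arbitrary values picked for illustration, not measurements of any real device or toolkit.

```python
def constant_speed(t):
    """The programmer's default: the same speed from start to finish."""
    return 1.0


def vehicle_speed(t, ramp=0.2):
    """Short acceleration, cruising at full speed, then deceleration."""
    if t < ramp:
        return t / ramp
    if t > 1.0 - ramp:
        return (1.0 - t) / ramp
    return 1.0


def flick_speed(t, push=0.1, peak=0.3, push_speed=0.7):
    """Quick acceleration while the finger pushes, slower acceleration
    up to the maximum speed, then a longer and gentler deceleration."""
    if t < push:
        return push_speed * t / push
    if t < peak:
        return push_speed + (1.0 - push_speed) * (t - push) / (peak - push)
    return (1.0 - t) / (1.0 - peak)


for t in (0.0, 0.05, 0.1, 0.3, 0.5, 1.0):
    print(t, constant_speed(t), vehicle_speed(t), flick_speed(t))
```

Note that the flick profile has no cruising phase at all: it reaches the maximum speed at `peak` and starts slowing down right away, since nothing keeps powering the movement after the finger has left the surface.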

Let’s take a look at the distance graphs. Here’s the one for the constant-speed movement:

Here’s the one for the vehicle-emulating movement:

And finally, here’s the one for finger-flick model:

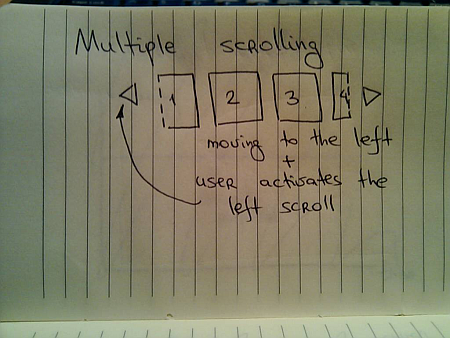
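To make the relationship between the velocity graphs and the distance graphs concrete, here is a minimal sketch that integrates each velocity profile over time. The piecewise-linear shapes and the breakpoint values (`ramp`, `push`, `peak`, and the 0.7 level reached after the initial push) are assumptions chosen only to mimic the descriptions above; they are not taken from the original graphs.

```python
def constant_speed(t):
    """Constant-speed model: the same velocity for the whole scroll."""
    return 1.0


def vehicle(t, ramp=0.2):
    """Vehicle model: short acceleration, cruising, then deceleration.

    t is normalized time in [0, 1]; ramp is an assumed fraction of the
    total time spent accelerating (and, symmetrically, decelerating).
    """
    if t < ramp:
        return t / ramp
    if t > 1.0 - ramp:
        return (1.0 - t) / ramp
    return 1.0


def finger_flick(t, push=0.1, peak=0.3):
    """Finger-flick model: very quick acceleration while the finger pushes,
    slower acceleration up to the peak, then a longer deceleration.

    push and peak are assumed breakpoints in normalized time.
    """
    if t < push:
        return 0.7 * t / push
    if t < peak:
        return 0.7 + 0.3 * (t - push) / (peak - push)
    return (1.0 - t) / (1.0 - peak)


def distance_curve(velocity, steps=1000):
    """Integrate velocity over [0, 1] with the midpoint rule and normalize
    the result so that the scroll always ends at distance 1.0."""
    dt = 1.0 / steps
    total = 0.0
    curve = [0.0]
    for i in range(steps):
        total += velocity((i + 0.5) * dt) * dt
        curve.append(total)
    return [d / total for d in curve]


if __name__ == "__main__":
    for model in (constant_speed, vehicle, finger_flick):
        curve = distance_curve(model)
        # Fraction of the total distance covered at 10%, 50% and 90% of the time.
        print(model.__name__, [round(curve[i], 3) for i in (100, 500, 900)])
```

With these assumed shapes, the constant-speed curve is a straight line, the vehicle curve starts and ends slowly but is symmetric around the midpoint, and the finger-flick curve covers well over half of the distance in the first half of the time, then eases into its final position.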

Now let’s take a look at what happens when the user initiates another interaction while you’re still processing (animating) the previous one. You cannot ignore such scenarios once you have animations in your UI – imagine if you wanted to go to page 200 of a book and had to turn the pages one by one. In the real world, you do not wait until the previous page has been completely turned in order to turn the next page.

The same exact principle applies to scrolling these information tiles. While you’re animating the previous scroll, the user must be able to initiate another scroll – in the same or the opposite direction. If you have read the previous entries, this directly relates to switching direction in the physical world.

Suppose you’re in the middle of the scrolling animation:

And the user presses the left scroll button once again. You do not want to wait until the current scroll is done. Rather, you need to take the current animation and make it scroll to the new position. Here, an interesting question arises – do you scroll faster?

For the specific scenario that is used here – scrolling the images – you have different options. You can maintain the same scrolling speed, or you can increase the scrolling speed in order to cover more “ground” in the same amount of time. Let’s take a look at some numbers.

- Suppose it takes 1000ms to make the full scroll.

- 800ms after the scroll has started, the user initiates another scroll in the same direction.

What are the options?

- Maintain the same scroll speed, effectively completing the overall scroll in 2000ms.

- Complete the overall scroll in the same time left for the first one – effectively completing the overall scroll in 1000ms.

- Combine the distance left for the first scroll and the full distance required for the second scroll, and complete the combined distance in 1000ms – effectively completing the overall scroll in 1800ms.

There is no best solution. There is only the best solution for the specific scenario. Given the specific scenario at hand – scrolling images – I’d say that option 1 can be ruled out immediately. If the user initiates multiple scrolls, those should be combined into faster scrolls. What about option 2? It’s certainly simpler than option 1, but can result in unsettlingly fast – or almost immediate – scrolls the closer the second scroll initiation gets to the completion of the first scroll. In our case, the second scroll initiation came 200ms before the first scroll was about to be completed. Now, you cover the remaining distance and the full distance for the second scroll in only 200ms. It is going to be fast, but it can get too fast.
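The numbers behind the three options can be worked out with a few lines of arithmetic. This is only a sketch under two simplifying assumptions that the text does not state: the first scroll moves at constant speed, and both scrolls cover the same distance. The function name `retarget_options` and the option keys are made up for illustration.

```python
def retarget_options(full_ms=1000.0, elapsed_ms=800.0):
    """Compare the three ways of handling a second scroll request.

    Distances are measured in units of one full scroll. Returns, for each
    option, the total time from the start of the first scroll and how much
    faster than the original speed the remaining part has to move.
    """
    base_speed = 1.0 / full_ms              # one scroll distance per full_ms
    covered = elapsed_ms * base_speed       # part of the first scroll already done
    remaining = (1.0 - covered) + 1.0       # rest of scroll 1 plus all of scroll 2

    time_left = {
        1: remaining / base_speed,          # option 1: keep the original speed
        2: full_ms - elapsed_ms,            # option 2: keep the original deadline
        3: full_ms,                         # option 3: a fresh full duration
    }
    return {
        option: {
            "total_ms": elapsed_ms + ms,
            "speedup": (remaining / ms) / base_speed,
        }
        for option, ms in time_left.items()
    }


if __name__ == "__main__":
    for option, numbers in retarget_options().items():
        print(option, round(numbers["total_ms"]), round(numbers["speedup"], 2))
```

For the 1000ms / 800ms scenario this gives totals of 2000ms, 1000ms and 1800ms, matching the list above. Under the constant-speed assumption, option 2 has to move about six times faster than the original scroll, while option 3 only needs to be about 1.2 times faster.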

If we adopt the third option for this specific scenario, the velocity graph for the constant-speed scrolling looks like this:

For the vehicle-based model it looks like this:

And for the finger flick based model it looks like this:

These two entries (on colors and scrolling) have shown just a glimpse of the complexity brought about by adding dynamic behavior to your UIs. Is spending time on proper design and implementation of animations based on the real world justified, especially when the animations themselves are short? This is going to be the subject of the final part.

To be concluded tomorrow.

In a roundabout way, this brings me to the visual clues that are pervasive throughout the Avatar movie. If you haven’t seen it yet, you may want to stop reading – but I’m not going to reveal too much. Our first exposure to Neytiri – the native Na’vi – is around half an hour into the movie. Apart from saving Jake’s life, she is quite hostile, and she does not hide it from him. What I find interesting is how James Cameron has decided to highlight her hostility. It is not only through her words and acts, but also through the body language, the hand movements and the facial expressions. They are purposefully inhuman – in the “not regularly seen done by humans” sense of the word. The way she breaks the sentences and moves her upper body with the words, the movement of facial muscles when she tells him that he doesn’t belong there, and the hand gestures used throughout their first encounter certainly do not make the audience relate to her. On the contrary, the first impression reinforced by her physical attitude is that of hostility, savageness and animosity.

The Na’vi look remarkably humanoid – just a little taller. The only outer difference is the tail and the number of fingers. Other than that – a funky (but not too funky) skin color, the same proportion of head / limbs / torso, the same facial features, no oozing slime and the same places where the hair doesn’t grow. This has certain technical advantages – mapping the movements of real actors onto the Na’vi bodies – including the facial expressions. In Avatar, however, there is a much deeper story behind the facial expressions. Cameron starts building on your prior experience with outward expression of human emotion in order to build your empathy towards the Na’vi cause, and make you root for them in the final battle scene. How likely is it that the Na’vi have developed not only the same body structure, but the same way positive emotions are reflected with facial expressions?